Building a File Analysis Dataset with Python

Using Python and Jupyter Notebooks to build a hierarchical CSV File

Last year I devised some ways of analyzing the history and structure of code in a visual way, but I didn’t document much of that to share with the community. This article explores the process I used to build a CSV file containing the path, extension, project, and line count of every source file in a project.

Note: if the idea of getting data out of a git repository interests you, check out my article on Using PyDriller to Extract git Information

This code is effective if you want to quickly analyze code files you have on your hard drive and build a dataset for further analysis and data visualization.

Much of the Python code I’m sharing is heavily borrowed from various StackOverflow answers with my own tweaks and changes. I have tried to credit all sources I used, but this code was built a year ago and I may have forgotten a source or two that was helpful.

Dependencies

I wrote this code in a Jupyter Notebook running Python 3.8. However, Jupyter Notebooks are not required for this code to function.

The code relies on the os library, which is standard to Python, as well as the pandas library for analyzing tabular data.

import pandas as pd

import os

The Code

What follows is a sequential breakdown of the functions needed to accomplish the task of extracting data from the code.

Identifying Files

Analyzing files cannot be done without determining what files to analyze.

I accomplish this using the os library’s listdir method along with several other path and directory methods to recursively traverse the directory tree starting in a given directory.

I also intentionally ignore directories known to contain a large number of ignorable files such as reporting directories, the .git directory, and directories used by the development environment.

# Some directories should be ignored as their output is not helpful
ignored_directories = ['.git', '.vs', 'obj', 'ndependout', 'bin', 'debug']

def get_file_list(dir_path, paths=None):
    """
    Gets a list of files in this directory and all contained directories
    """
    files = list()
    contents = os.listdir(dir_path)

    for entry in contents:
        path = os.path.join(dir_path, entry)
        if os.path.isdir(path):
            # Ignore build and reporting directories
            if entry.lower() in ignored_directories:
                continue

            # Maintain an accurate, but separate, hierarchy array
            if paths is None:
                p = [entry]
            else:
                p = paths[:]
                p.append(entry)

            files = files + get_file_list(path, p)
        else:
            files.append((path, paths))

    return files
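For comparison, the same traversal can be sketched with the standard library's os.walk, which handles the recursion itself. This is an alternative under stated assumptions, not the code I used for this article: the names walk_file_list and IGNORED are hypothetical, and the function is meant to return the same (path, folders) tuples as get_file_list, though not necessarily in the same order.

```python
import os

# Hypothetical name; same directory names as ignored_directories above
IGNORED = {'.git', '.vs', 'obj', 'ndependout', 'bin', 'debug'}

def walk_file_list(root):
    """
    Returns (file_path, folders) tuples for every file under root, where
    folders lists the directories between root and the file
    (or None for files directly inside root).
    """
    files = []
    for current, dirs, filenames in os.walk(root):
        # Pruning dirs in place stops os.walk from descending into them
        dirs[:] = [d for d in dirs if d.lower() not in IGNORED]

        rel = os.path.relpath(current, root)
        folders = None if rel == os.curdir else rel.split(os.sep)

        for name in filenames:
            files.append((os.path.join(current, name), folders))
    return files
```

One difference to be aware of: os.walk does not follow symbolic links to directories by default, while the os.path.isdir check in get_file_list does.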

Determining if a file contains source code

In order to determine if a file is a source code file and should be included in the results, I chose a naive approach where I only pay attention to files with certain extensions.

This code could benefit from a more comprehensive list or even perhaps some advanced analysis of the contents of the files. However, simply looking at the extension was fine for my purposes and should serve most readers well.

# Common source file extensions. This list is very incomplete. Intentionally not including JSON / XML
source_extensions = [
    '.cs',
    '.vb',
    '.java',
    '.r',
    '.agc',
    '.fs',
    '.js',
    '.cpp',
    '.go',
    '.aspx',
    '.jsp',
    '.do',
    '.php',
    '.ipynb',
    '.sh',
    '.html',
    '.lua',
    '.css'
]

def is_source_file(file_label):
    """
    Defines what a source file is.
    """
    file, _ = file_label
    _, ext = os.path.splitext(file)

    return ext.lower() in source_extensions
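To make the behavior of the extension check concrete, here is a small sketch run against a few made-up entries. The function name has_source_extension, the reduced SOURCE_EXTENSIONS set, and the sample paths are all hypothetical; the logic is the same comparison of a lower-cased extension shown above.

```python
import os

# Hypothetical subset of source_extensions, stored as a set for fast lookups
SOURCE_EXTENSIONS = {'.cs', '.js', '.html'}

def has_source_extension(file_label):
    """Compares the lower-cased extension of the path against the known set."""
    file, _ = file_label
    _, ext = os.path.splitext(file)
    return ext.lower() in SOURCE_EXTENSIONS

# Entries have the (path, folders) shape produced by get_file_list
samples = [
    ('src/App/Program.CS', ['App']),        # upper-case extension still matches
    ('src/App/appsettings.json', ['App']),  # JSON is intentionally excluded
    ('src/App/Makefile', ['App']),          # no extension at all
    ('src/App/.gitignore', ['App']),        # a leading dot is not an extension
]

kept = [path for path, _ in filter(has_source_extension, samples)]
print(kept)  # ['src/App/Program.CS']
```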

Counting Lines of Code

After that, I needed to be able to quickly determine the length of a file.

I used a Stack Overflow answer to determine how to efficiently read the number of lines in a file using the following code:

def count_lines(path):
    """
    Reads the file at the specified path and returns the number of lines in that file
    """
    def _make_gen(reader):
        b = reader(2 ** 16)
        while b:
            yield b
            b = reader(2 ** 16)

    with open(path, "rb") as f:
        count = sum(buf.count(b"\n") for buf in _make_gen(f.raw.read))

    return count

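This code reads the file in 64 KiB binary chunks and counts the newline bytes in each chunk, so it never has to decode the text or build a string for every line. Because it counts newline characters, a final line with no trailing newline is not included in the total.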
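If the last line of a file matters to you, the chunked approach can be adjusted. The sketch below is one possible variation rather than the Stack Overflow answer itself: count_newlines and count_lines_inclusive are hypothetical names, and the second function adds one when a non-empty file does not end in a newline.

```python
import os
import tempfile

def count_newlines(path, chunk_size=2 ** 16):
    """Counts newline bytes by reading the file in fixed-size binary chunks."""
    count = 0
    with open(path, "rb") as f:
        while True:
            chunk = f.read(chunk_size)
            if not chunk:
                break
            count += chunk.count(b"\n")
    return count

def count_lines_inclusive(path):
    """Adds one when the file is non-empty and its last byte is not a newline."""
    count = count_newlines(path)
    with open(path, "rb") as f:
        f.seek(0, os.SEEK_END)
        if f.tell() > 0:
            f.seek(-1, os.SEEK_END)
            if f.read(1) != b"\n":
                count += 1
    return count

# Demonstration on a temporary file whose last line has no trailing newline
with tempfile.NamedTemporaryFile("wb", suffix=".txt", delete=False) as tmp:
    tmp.write(b"first\nsecond\nthird")
demo_path = tmp.name

print(count_newlines(demo_path))         # 2
print(count_lines_inclusive(demo_path))  # 3
os.remove(demo_path)
```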

Geeks for Geeks offers a slower but easier to understand implementation for those interested:

def count_lines_simple(path):
    with open(path, 'r') as fp:
        return sum(1 for line in fp)
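One thing to watch with the text-mode version: when no encoding is given, open decodes the file using a platform-dependent default, and a source file saved in a different encoding can raise a UnicodeDecodeError. A minimal sketch of a more forgiving variant follows. The name count_lines_text is hypothetical, and defaulting to UTF-8 is an assumption about your files.

```python
import os
import tempfile

def count_lines_text(path, encoding="utf-8"):
    """
    Counts lines in text mode. Undecodable bytes are replaced rather than
    raising UnicodeDecodeError, which is acceptable when only the count matters.
    """
    with open(path, "r", encoding=encoding, errors="replace") as fp:
        return sum(1 for _ in fp)

# A file containing a byte (0xFF) that is not valid UTF-8
with tempfile.NamedTemporaryFile("wb", delete=False) as tmp:
    tmp.write(b"caf\xff\nline two\n")

print(count_lines_text(tmp.name))  # 2
os.remove(tmp.name)
```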

Getting File Metrics

After this, I built a function that could take multiple files and generate a list of detail objects for all the files in the list.

Each file details object contained:

- The root directory the analysis started in

- The full path of the file

- The project the file is in (the top-level directory under the root that contains the file)

- The path of the file relative to the project directory

- The name of the file

- The file’s extension

- The number of lines in that file

This is accomplished by looping over all files and folders in the incoming files parameter, then counting the lines of the files using the count_lines function, enumerating any folders, and building path information on each file.

Once all information is known, a file details object is created and appended to the resulting list that is returned once all files have been analyzed.

def get_file_metrics(files, root):
    """
    This function gets all metrics for the files and returns them as a list of file detail objects
    """
    results = []

    for file, folders in files:
        lines = count_lines(file) # Slow as it actually reads the file
        _, filename = os.path.split(file)
        _, ext = os.path.splitext(filename)

        fullpath = ''

        if folders is not None and len(folders) > 0:
            project = folders[0]
            for folder in folders[1:]:
                if len(fullpath) > 0:
                    fullpath += '/'
                fullpath += folder
        else:
            project = ''

        if len(fullpath) <= 0:
            fullpath = '.'

        file_id = root + '/' + project + '/' + fullpath + '/' + filename

        file_details = {
            'fullpath': file_id,
            'root': root,
            'project': project,
            'path': fullpath,
            'filename': filename,
            'ext': ext,
            'lines': lines,
        }
        results.append(file_details)

    return results
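The folder handling in get_file_metrics is easier to follow when isolated. The helper below is a hypothetical condensation of that logic (split_hierarchy is not part of the original code, and the folder names are made up): the first folder becomes the project and the rest are joined into the relative path.

```python
def split_hierarchy(folders):
    """
    Mirrors the folder handling in get_file_metrics: the first folder is the
    project, the remaining folders form the relative path ('.' if there are none).
    """
    if not folders:
        return '', '.'
    project = folders[0]
    relative = '/'.join(folders[1:]) or '.'
    return project, relative

print(split_hierarchy(['ProjectA', 'Data', 'IO']))  # ('ProjectA', 'Data/IO')
print(split_hierarchy(['ProjectA']))                # ('ProjectA', '.')
print(split_hierarchy(None))                        # ('', '.')
```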

Putting it all together

Finally, I built a central function to kick off source analysis in a given path:

def get_source_file_metrics(path):
    """
    This function gets all source files and metrics associated with them from a given path
    """
    source_files = filter(is_source_file, get_file_list(path))
    return get_file_metrics(list(source_files), path)

This function calls the other functions to build the resulting list of file details.

I can invoke it by declaring a list of directories I’m interested in and then calling it on each of those directories.

# Paths should be one or more directories of interest
paths = ['C:/Dev/MachineLearning/src', 'C:/Dev/MachineLearning/test']

# Pull Source file metrics
files = []
for path in paths:
    files.extend(get_source_file_metrics(path))

Here I’m analyzing all projects in the src and test directories of the ML.NET repository. I chose to include these as separate paths because they represent two different groupings of projects in this repository.

Saving the Results

Once the files list has been populated, it can very easily be used to create a Pandas DataFrame for tabular analysis. The DataFrame also offers an easy method to serialize the data to a CSV file as shown below:

# Grab the file metrics for source files and put them into a data frame

df = pd.DataFrame(files)

df = df.sort_values('lines', ascending=False)

# Write to a file for other analysis processes

df.to_csv('filesizes.csv')
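By default, to_csv also writes the DataFrame's row index as an unnamed first column, and after sorting, that index holds the original row positions. If you don't need it, a sketch like the following leaves it out and checks that the data reads back cleanly. The rows here are hypothetical stand-ins for real file details, and an in-memory buffer takes the place of filesizes.csv.

```python
import io
import pandas as pd

# Hypothetical rows with the same kind of columns as the file details dictionaries
rows = [
    {'project': 'ProjectA', 'path': 'Data', 'filename': 'Loader.cs', 'ext': '.cs', 'lines': 120},
    {'project': 'ProjectA', 'path': '.', 'filename': 'Program.cs', 'ext': '.cs', 'lines': 40},
    {'project': 'ProjectB', 'path': 'wwwroot', 'filename': 'site.js', 'ext': '.js', 'lines': 75},
]
df = pd.DataFrame(rows).sort_values('lines', ascending=False)

# index=False leaves the DataFrame's row index out of the CSV
buffer = io.StringIO()
df.to_csv(buffer, index=False)

# Read the CSV text back, as a later analysis step might
buffer.seek(0)
loaded = pd.read_csv(buffer)

# Total lines per project
totals = loaded.groupby('project')['lines'].sum()
print({project: int(total) for project, total in totals.items()})
# {'ProjectA': 160, 'ProjectB': 75}
```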

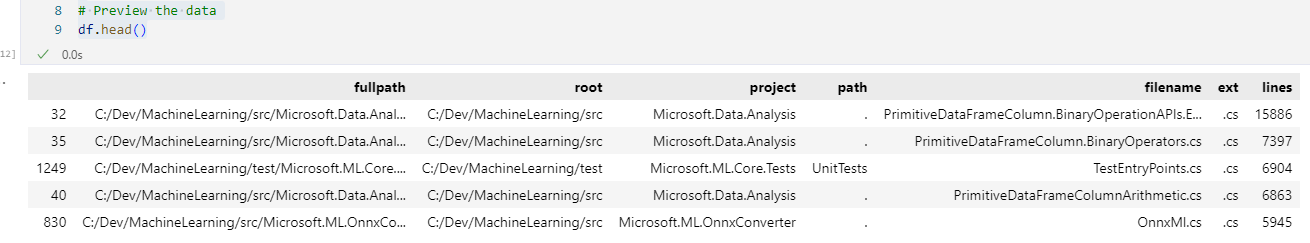

Finally, we can preview the first 5 rows of that dataset using the df.head() method.

In my sample dataset, this produces the following results:

Next Steps

Now that you have hierarchical data saved to a CSV file, and I’ve illustrated a separate way to get information out of a git repository, the next steps will involve merging this data together for analysis and visualization.

Stay tuned for future updates in this series on Visualizing Code.